Cerebras Systems: The World’s First Wafer-Scale Processor for AI

Insights from Fabrica Ventures Team.

Artificial Intelligence (AI) can be defined as “the ability of a digital computer or computer-controlled robot to perform tasks commonly associated with intelligent beings” (Britannica).

The AI theme is very broad, but regardless of the method, the analytical approach, or the purpose of the application, enormous computing power is required. AI is profoundly computationally intensive, with AI compute demand doubling every 3-4 months. The scale at which AI operates is tremendous: think of the computing power needed to connect the pixels of a video stream to a large statistical engine and get out the commands that drive a car.
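To put the doubling rate in perspective, a short calculation converts a doubling period into an annual growth multiple. This is only a back-of-the-envelope sketch: the function name `annual_growth_factor` is illustrative, and it assumes steady exponential growth over a full year, which the figure quoted above does not guarantee.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """Growth multiple over 12 months, assuming steady exponential doubling."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    # Number of doublings in a year is 12 / doubling_months.
    return 2 ** (12 / doubling_months)


# The article quotes a doubling period of 3-4 months.
for months in (3, 4):
    factor = annual_growth_factor(months)
    print(f"doubling every {months} months -> about {factor:.0f}x per year")
```

Under that assumption, doubling every 3 to 4 months corresponds to roughly 8x to 16x more compute demand each year.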

AI does not work from explicit instructions of the if-then-else type; it is not based on the classical von Neumann architecture of a central processing unit (CPU) giving orders. Instead, huge amounts of data flow through AI models, and feedback loops show how to manipulate and transform them. Testing a single new hypothesis can take weeks or months. AI is all about data in motion.

AI demand is insatiable. AI is constrained by the availability of compute, not by applications or ideas. Indeed, the AI chip market is expected to grow 7x by 2027 (Statista).

Nvidia, the most valuable chip designer in the world, is “all in” on AI. So it is no surprise that, even with revenues 3.5x lower, Nvidia’s market cap is 3.5x that of Intel, the dethroned CPU king. Nvidia’s $755B value is already similar to the combined value of the 397 companies listed on the Bovespa.
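The two ratios in the paragraph above compound. A quick sketch, using only the 3.5x figures quoted there and a hypothetical helper named `relative_price_to_sales`, shows how much more the market pays per dollar of Nvidia revenue than per dollar of Intel revenue. It is illustrative arithmetic, not a valuation model.

```python
def relative_price_to_sales(market_cap_ratio: float, revenue_ratio: float) -> float:
    """Ratio of two companies' price-to-sales multiples.

    market_cap_ratio: company A's market cap divided by company B's.
    revenue_ratio:    company A's revenue divided by company B's.
    """
    if revenue_ratio <= 0:
        raise ValueError("revenue_ratio must be positive")
    return market_cap_ratio / revenue_ratio


# Figures from the text: market cap 3.5x Intel's, revenues 3.5x lower.
multiple = relative_price_to_sales(market_cap_ratio=3.5, revenue_ratio=1 / 3.5)
print(f"Nvidia's price-to-sales multiple is about {multiple:.2f}x Intel's")
```

On these figures, each dollar of Nvidia revenue is valued at roughly 12 times a dollar of Intel revenue.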

However, throughout the history of computing, no important new workload has ever been best served by pre-existing equipment that was designed to do something else.

A Los Altos (CA) startup called Cerebras Systems is drastically changing the landscape of compute for AI. Unlike Nvidia, whose GPUs were initially designed for gaming, Cerebras, backed by $723M of VC money, undertook the ambitious task of designing a system from the ground up to accelerate AI applications.

The Cerebras system is the world’s only purpose-built deep learning solution, built on innovations across three dimensions:

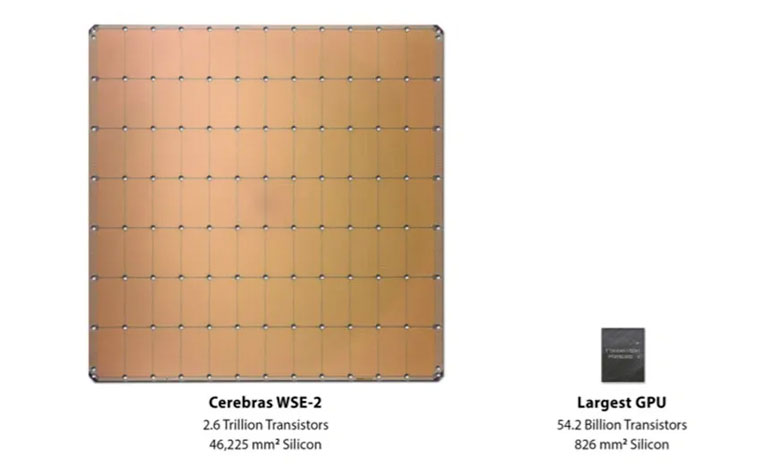

* The Wafer Scale Engine: the largest chip ever built (the size of a full wafer), the fastest, and the only multi-trillion-transistor processor (it carries 340 transistors for every person on Earth)

* The computer system

* The software platform

The Cerebras solution is now used, on premises or in the cloud, by research labs and large companies all over the world. GlaxoSmithKline, for instance, is using Cerebras to make better predictions in drug discovery.

Conclusion

AI is transforming every aspect of technology.

AI has emerged as the most important computational workload of our generation.

Located in Silicon Valley, Fabrica Ventures could not pass up the opportunity to invest in Cerebras.